Transformation of the Brain-Score leaderboard into a dynamic scientific exploration tool for understanding AI and brain alignment.

Overhauling the Brain-Score Leaderboard | Release Notes

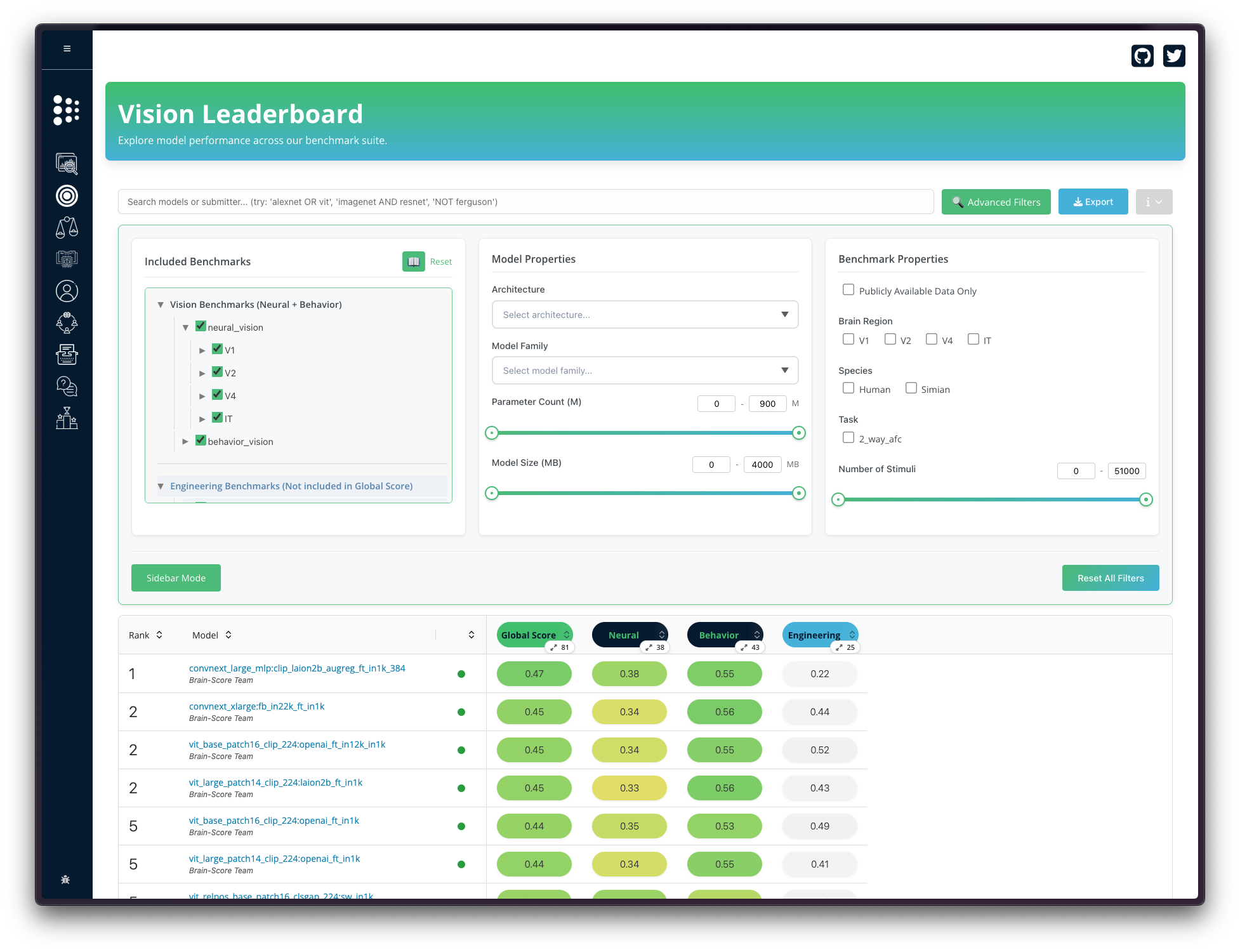

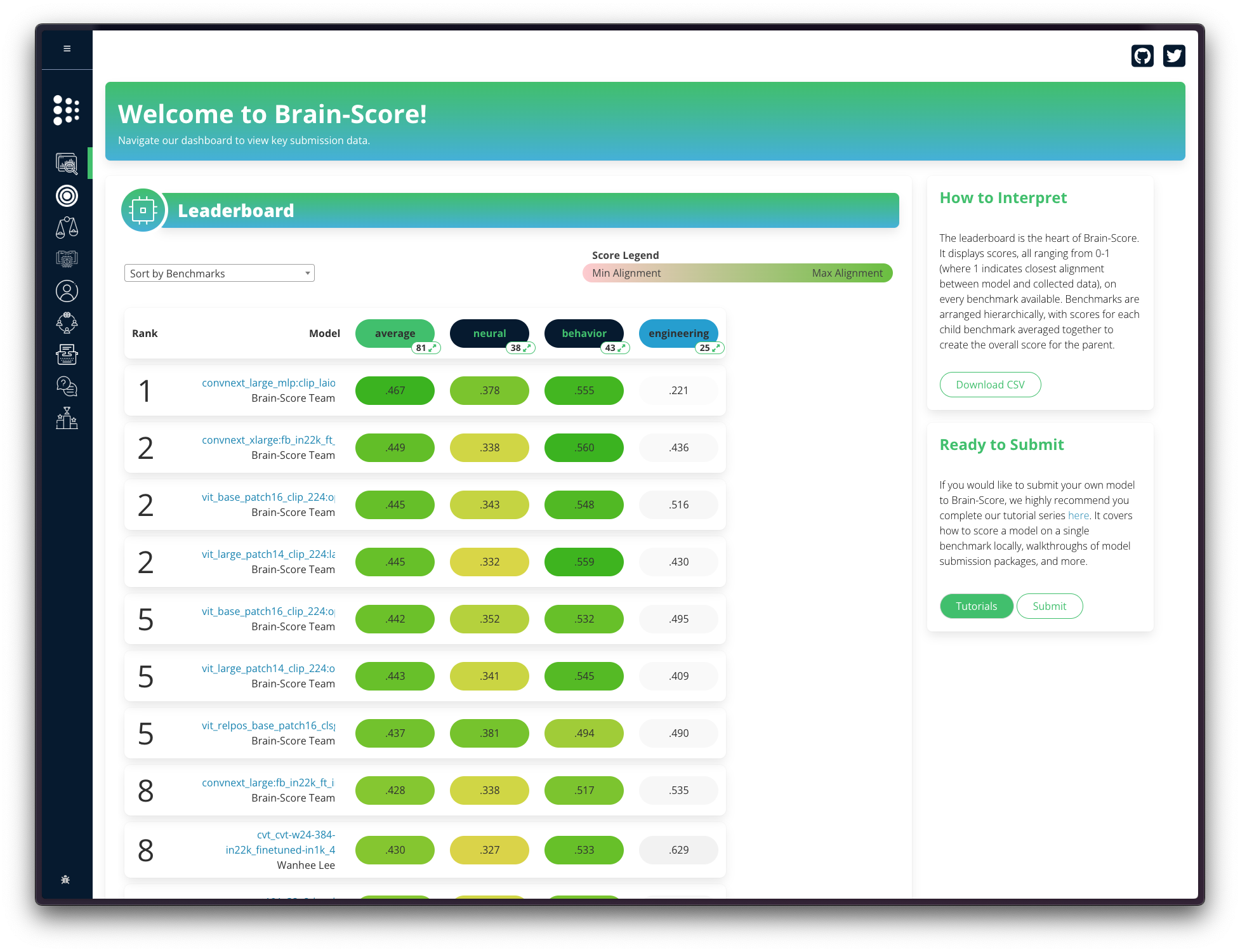

Brain-Score is the benchmarking platform for evaluating how well AI models match biological neural responses and behaviors. A fundamental service to the community is the leaderboard: an interface enabling researchers to compare model alignment across dozens of behavioral and neural benchmarks, currently spanning vision and language domains.

As the platform expanded to hundreds of models and increasingly complex benchmarks, we recognized that our community needed more than just ranking. Researchers want to know what is happening under the hood.

To address this, we've recently transformed the leaderboard from a passive display of information into a powerful exploratory tool, designed to help the community answer deeper scientific questions with ease.

What's New

The redesigned leaderboard shares the aesthetic and familiarity of the old leaderboard with an added layer of interactivity and customization that transforms it into a scientific tool:

-

Interactive filtering and search - Narrow the search space of models by architecture type, parameter size, training data, or benchmark task directly in the browser. Instantly compare transformers against CNNs on vision tasks, figure out which regions and benchmarks are most challenging, and if model size really matters for alignment.

-

Customizable scoring - Have a specific research question? Go beyond the default aggregate score by selecting which benchmarks to include or exclude and instantly re-weight results.

-

Wayback machine - Travel back in time through the leaderboard using an interactive date slider. See models and scores as they existed at any historical point, track how rankings have shifted over time, and observe how newly submitted benchmarks and models have reshaped the landscape.

-

Sharable, persistent views - Every filter or configuration generates a unique URL, enabling you to:

- Share a link with your collaborators and see exactly the same filtered view.

- Bookmark a configuration to revisit later.

- Embed reproducible views in papers and talks.

-

Integrated citations - Instantly export a BibTeX list for all plugins (benchmarks & models) relevant to your current view, that can be pasted directly into your reference manager, simplifying attribution and ensuring contributors receive proper credit. Please remember to cite all individual contributors; this feature should make it very easy.

-

Public/private view toggle - Switch seamlessly between viewing only your private models and comparing them against all public models from within your profile leaderboard.

Why It Matters

These new features make Brain-Score more interactive and improve interpretability via customizable comparisons across all public benchmarks and models submitted to the platform. This will enable rapid (initial) answers to questions such as "Which types of models are most brain-like?", "In which tasks do models differ from human behavior?", "What aspects of V1 processing are most difficult?" and so forth. We hope that this means the leaderboard isn't just a scoreboard, but now also a tool for probing relationships between models and the brain.

What's Next?

We are actively extending the platform:

-

Benchmark metadata expansion - Currently, the available metadata is limited. We will be introducing more fields which will allow fine-grained slicing of benchmarks.

-

Streamlined benchmark submissions - We are working on enabling benchmark submissions directly via the website, alongside metadata extraction. This will lower the barrier for labs to contribute datasets and broaden the benchmark coverage.

Interested In The Technical Blog? For implementation details keep an eye out on our technical blog. Contributions are welcome on GitHub.